How AI Is Transforming Delegation of Authority: Why Enterprise Governance Must Extend to Agents

AI agents are no longer experimental. They are approving expenses, routing procurement decisions, executing trades, and managing customer commitments across enterprise platforms. Yet in most organizations, the governance framework that defines who can approve what — the delegation of authority — was designed for a world where every decision-maker was human. That gap between what AI agents can do and what they are authorized to do is creating a new category of enterprise risk.

This is not a technology problem. It is a governance architecture problem. The same delegation of authority frameworks that govern human decision-making must now extend to autonomous agents — with the same rigor around limits, conditions, accountability, and auditability. The organizations that adapt their authority governance first will deploy agentic AI faster and more safely. Those that do not will face regulatory exposure, unauditable decisions, and a growing trust deficit between the board and the systems operating on its behalf.

Why Does AI Require Enterprise Authority Governance, Not Just AI Guardrails?

AI agents require enterprise authority governance because they are making business decisions that carry financial, legal, and reputational consequences — the same consequences that justify formal delegation frameworks for human decision-makers.

The scale of agent adoption makes this urgent. A 2025 SailPoint survey found that 80% of organizations report unintended AI agent actions, while 98% plan to expand agent use. The OWASP Foundation lists Excessive Agency as LLM06 in its 2025 Top 10 for Large Language Model Applications, recognizing that AI systems with insufficient authority boundaries represent a systemic enterprise vulnerability.

Traditional AI guardrails — model safety filters, prompt engineering, output monitoring — address what an agent can do technically. Authority governance addresses what an agent should be authorized to do on behalf of the organization. These are fundamentally different questions. An AI procurement agent might have the technical capability to approve a $500,000 purchase order, but should it? Under whose delegation? With what approval threshold? And who bears accountability when that authority is exercised?

Without a structured delegation of authority framework that governs agent decisions alongside human decisions, organizations end up with authority boundaries buried in system configurations, invisible to auditors, and disconnected from the governance structures their boards have approved.

What Regulatory Forces Are Making AI Authority Governance Mandatory?

Multiple regulatory frameworks are converging on the same requirement: organizations must document, trace, and maintain human oversight over AI-mediated decisions — which demands a governed authority infrastructure.

The EU AI Act, with high-risk system obligations taking effect August 2026, requires a risk management system (Article 9), record-keeping and traceability (Article 12), transparency (Article 13), and human oversight (Article 14) for high-risk AI systems. The UK Corporate Governance Code’s Provision 29, which applies to financial years beginning on or after 1 January 2026, requires boards to declare the effectiveness of their material internal controls, a declaration that extends to AI systems wherever they operate within delegated authority.

Singapore’s Model AI Governance Framework for Agentic AI, published in January 2026 as the world’s first government-backed framework specifically for agentic AI, prescribes four governance actions: assess and bound risks upfront, increase human accountability, implement technical controls, and enable end-user responsibility. It explicitly requires least-privilege access, human approval checkpoints for sensitive actions, and bounded authority — precisely the capabilities that a delegation of authority policy provides when extended to AI agents.

A February 2026 Gartner analysis projected that global AI regulations will fuel a billion-dollar-plus market for AI governance platforms by 2028, up from $492 million in 2025. The regulatory floor is rising, and delegation of authority governance sits at the center of what compliance will require.

What Is the Authority Layer, and Why Does AI Make It Essential?

The authority layer is the enterprise governance infrastructure that defines who — human or agent — can approve, sign, and commit on behalf of the organization, under what conditions and limits.

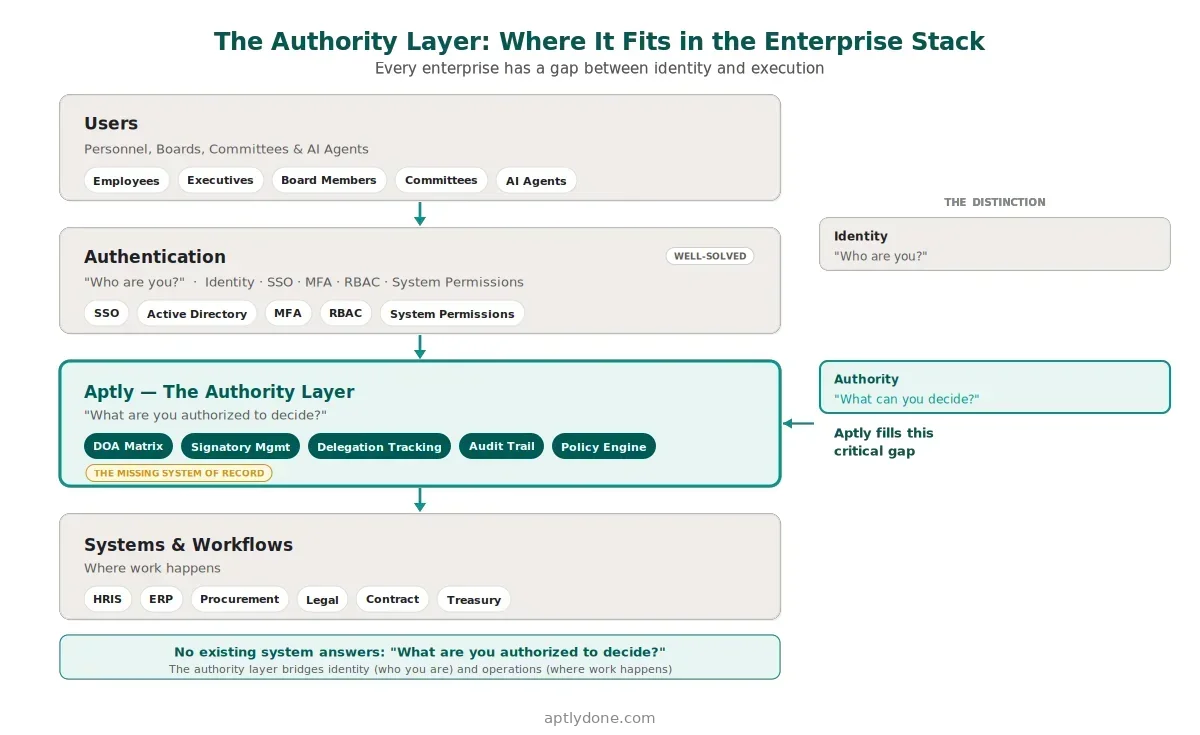

Definition: Authority layer — A centralized system of record for enterprise decision rights that sits between identity systems (who are you?) and execution systems (where work happens), governing what each principal is authorized to decide. With AI agents joining the workforce, this layer must govern both human and machine decision-makers.

Every enterprise technology stack already has mature solutions for identity (Okta, Entra ID) and for execution (ERP, CRM, procurement, ITSM). What has been missing is the governance layer in between — the layer that answers not “can you access this system?” but “are you authorized to make this decision?” For human employees, that question was historically answered by spreadsheet-based DOA matrices and PDF policy documents. A 2025 EY and Society for Corporate Governance survey found that 78% of organizations still host their delegation of authority on the company intranet, while only 14% embed it in a dedicated IT system.

That approach was already fragile for human governance. For AI agents, it is unworkable. An agent cannot be reliably bound by limits written in a PDF. It needs machine-readable authority boundaries (scoped limits, conditions, escalation rules, and expiration dates) delivered through API-accessible infrastructure. A platform like Aptly provides exactly this: a governed authority registry where delegation records apply to both human roles and AI agents, with authority decisions consumable by the systems where agents operate. Google DeepMind’s February 2026 delegation framework research concluded that existing AI protocols lack adequate governance layers for deep delegation chains, which supports the case for purpose-built authority infrastructure.
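To make "machine-readable authority boundaries" concrete, here is a minimal sketch of what a single delegation record might look like. The schema, field names, and identifiers such as `agent:procure-bot` and `ACME-US` are illustrative assumptions, not the data model of Aptly or any other product.

```python
from dataclasses import dataclass
from datetime import date
from decimal import Decimal
from typing import FrozenSet


@dataclass(frozen=True)
class DelegationRecord:
    """One machine-readable grant of authority (hypothetical schema)."""
    principal_id: str             # who holds the authority: a human role or an AI agent
    principal_type: str           # "human" or "agent"
    delegator_id: str             # accountable human who granted the authority
    decision_type: str            # e.g. "purchase_order.approve"
    limit: Decimal                # monetary ceiling for a single decision
    entity_scope: FrozenSet[str]  # legal entities the grant applies to
    expires_on: date              # last day the grant is valid
    escalate_to: str              # who receives decisions outside these bounds

    def covers(self, decision_type: str, amount: Decimal, entity: str, on: date) -> bool:
        """Return True only if every boundary of the grant is satisfied."""
        return (
            decision_type == self.decision_type
            and amount <= self.limit
            and entity in self.entity_scope
            and on <= self.expires_on
        )


# Example grant: all values are made up for illustration.
record = DelegationRecord(
    principal_id="agent:procure-bot",
    principal_type="agent",
    delegator_id="role:procurement-manager",
    decision_type="purchase_order.approve",
    limit=Decimal("10000"),
    entity_scope=frozenset({"ACME-US"}),
    expires_on=date(2026, 12, 31),
    escalate_to="role:procurement-manager",
)
```

Because the record is structured data rather than a document, an agent platform could call something like `record.covers(...)` before acting, and the same record type could describe a human role by setting `principal_type` to `"human"`.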

How Should Boards Think About Accountability for AI-Mediated Decisions?

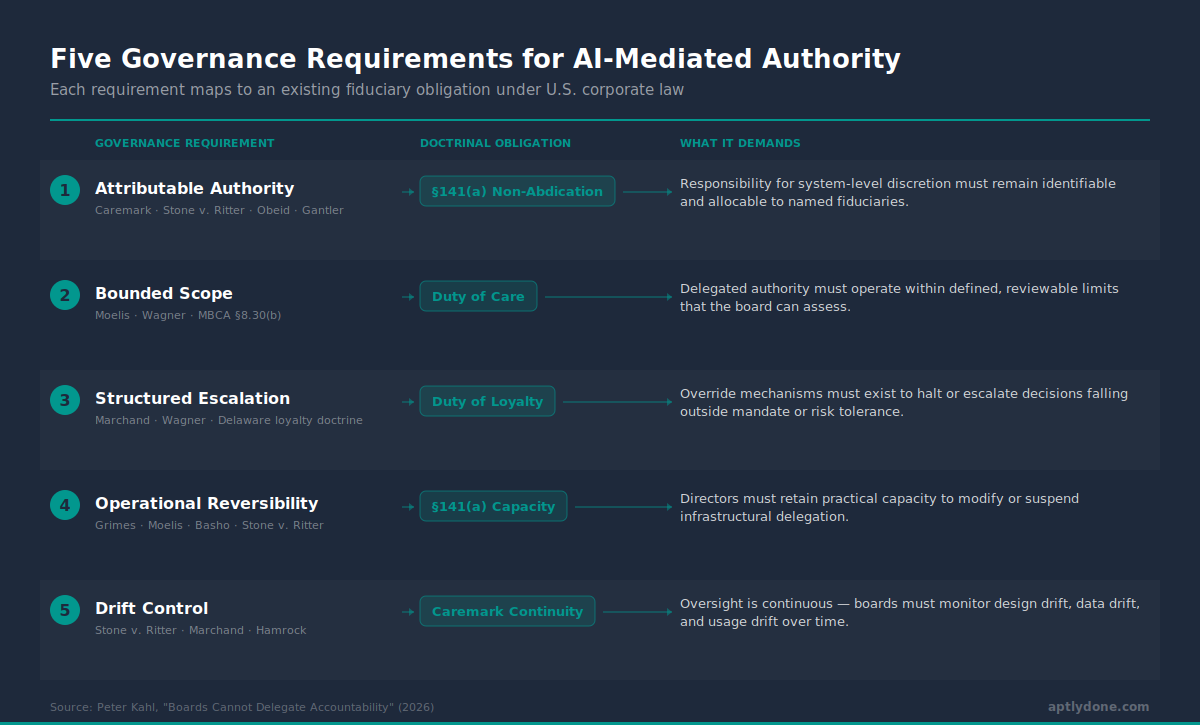

Boards must treat AI-mediated decisions with the same governance rigor as human-mediated decisions — because fiduciary obligations do not transfer to algorithms.

This is the central argument Peter Kahl makes in his analysis of board accountability and AI-mediated authority: under U.S. corporate law, most prominently Delaware’s, boards can delegate authority but cannot delegate accountability. The Caremark oversight doctrine requires directors to maintain information and reporting systems reasonably designed to provide timely, accurate information. When AI agents execute decisions under delegated authority, that accountability obligation does not diminish. It intensifies, because the decisions happen faster, at greater scale, and with less inherent human visibility.

Yet most organizations lack the infrastructure to satisfy this requirement. Deloitte’s 2026 State of AI report, which surveyed 3,235 leaders across 24 countries, found that only 21% of companies have a mature governance model for autonomous AI agents, and 69% say fully implementing a governance strategy will take over a year. That timeline creates a window of exposure: agents are being deployed now, but the governance structures to oversee them are still months or years away.

A delegation of authority framework that governs both humans and agents closes this gap by ensuring every AI-mediated decision has an identifiable human delegator, documented authority boundaries, and a complete audit trail connecting the agent’s action to the governance policy that authorized it.
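As a rough illustration of such an audit trail, the sketch below writes one entry per authority check and ties the agent's action to its human delegator and to the policy that authorized it. The function name `record_authority_check`, the field names, and the policy reference `DOA-POLICY-EXAMPLE-1` are hypothetical, and the in-memory list stands in for whatever append-only store an organization actually uses.

```python
import json
from datetime import datetime, timezone
from typing import Dict, List

AUDIT_LOG: List[str] = []  # stand-in for an append-only audit store


def record_authority_check(agent_id: str, delegator_id: str, policy_ref: str,
                           action: str, amount: int, outcome: str) -> Dict[str, object]:
    """Append one audit entry linking an agent action to its delegator and policy."""
    entry: Dict[str, object] = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent_id,          # which agent acted
        "delegator_id": delegator_id,  # identifiable human who delegated the authority
        "policy_ref": policy_ref,      # governance policy that authorized the action
        "action": action,
        "amount": amount,
        "outcome": outcome,            # e.g. "allow", "escalate", "deny"
    }
    # Serialize with sorted keys so entries have a stable, comparable form.
    AUDIT_LOG.append(json.dumps(entry, sort_keys=True))
    return entry


# Example entry: all identifiers are made up for illustration.
entry = record_authority_check(
    agent_id="agent:procure-bot",
    delegator_id="role:procurement-manager",
    policy_ref="DOA-POLICY-EXAMPLE-1",
    action="purchase_order.approve",
    amount=2500,
    outcome="allow",
)
```

The point of the design is that an auditor can start from any logged action and reach both a named human and a policy reference without consulting system configuration.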

What Does an AI-Ready Delegation of Authority Framework Look Like?

An AI-ready delegation of authority framework extends traditional human governance to agents through machine-readable authority boundaries, real-time enforcement, and accountable escalation.

The framework must address three requirements that static DOA matrices cannot. First, authority must be machine-readable — not trapped in PDF documents or spreadsheet cells, but structured as queryable records that agent platforms can check at runtime before executing a decision. Second, authority must be bounded and conditional — AI agents need scoped limits (monetary thresholds, transaction types, entity scope, time windows) that mirror the conditions organizations already apply to human delegations. Third, authority must include human escalation routing — when an agent encounters a decision that exceeds its delegated boundaries, the system must route that decision to the correct accountable human based on the delegation hierarchy, not simply halt execution.
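The three requirements above can be sketched as a single runtime check. In this simplified example, authority is a queryable table, each grant carries a monetary limit, and a request beyond the agent's limit is routed up the delegation chain to the first human whose limit covers it. The names `GRANTS`, `check_authority`, and the role identifiers are assumptions for illustration. The choice to return `deny` when no one in the chain holds enough authority is also an assumption, since a real framework might route such cases to the board instead.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Grant:
    limit: int                # monetary ceiling, in whole currency units
    delegator: Optional[str]  # who granted this authority; None at the top of the chain


# Hypothetical delegation chain: agent -> manager -> CFO.
GRANTS: Dict[str, Grant] = {
    "agent:procure-bot": Grant(limit=10_000, delegator="role:procurement-manager"),
    "role:procurement-manager": Grant(limit=100_000, delegator="role:cfo"),
    "role:cfo": Grant(limit=1_000_000, delegator=None),
}


@dataclass(frozen=True)
class Decision:
    outcome: str             # "allow", "escalate", or "deny"
    route_to: Optional[str]  # accountable human to route to when escalating


def check_authority(principal: str, amount: int) -> Decision:
    """Check a request against the principal's grant, escalating up the chain if needed."""
    grant = GRANTS.get(principal)
    if grant is None:
        return Decision("deny", None)   # no delegation on record
    if amount <= grant.limit:
        return Decision("allow", None)  # within delegated bounds
    # Exceeds the principal's limit: find the nearest delegator who can decide.
    current = grant.delegator
    while current is not None:
        holder = GRANTS.get(current)
        if holder is None:
            break                       # chain is broken; fall through to deny
        if amount <= holder.limit:
            return Decision("escalate", current)
        current = holder.delegator
    return Decision("deny", None)       # nobody in the chain holds enough authority
```

With this table, a 5,000 request from the agent is allowed, a 50,000 request escalates to the procurement manager, and the $500,000 purchase order from the earlier example escalates to the CFO rather than halting.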

For organizations beginning this transition, the practical starting point is extending the existing delegation of authority framework rather than creating a parallel AI governance structure. The same DOA matrix structure that defines human approval thresholds can define agent thresholds. The same delegation chains that establish human accountability can designate who bears accountability for agent decisions. The same audit trail that records human authority exercises can record agent authority checks. For a deeper look at how delegation operates in practice, see our companion piece on managing authority in the age of agentic AI, which covers specific agent delegation scenarios and operational implementation.

Does AI governance require a separate authority framework from human delegation?

No. The most effective approach extends the existing delegation of authority framework to include AI agents as additional principals alongside human roles. This ensures agents operate under the same governance structure — with the same accountability chains, escalation rules, and audit requirements — that the organization already applies to human decision-makers. Maintaining a single authority framework prevents governance fragmentation and simplifies audit and compliance.

Which regulations specifically require authority governance for AI systems?

The EU AI Act (high-risk obligations effective August 2026) requires record-keeping, traceability, and human oversight for high-risk AI systems. The UK Corporate Governance Code’s Provision 29 (effective for financial years beginning on or after 1 January 2026) requires board declarations on internal control effectiveness, which encompasses AI systems operating within delegated authority. Singapore’s Model AI Governance Framework for Agentic AI (January 2026) explicitly prescribes bounded authority and human approval checkpoints. The OWASP LLM Top 10 (2025 edition) is an industry standard rather than a regulation, but it identifies Excessive Agency as a critical risk requiring formal authority boundaries.

How does authority governance for AI differ from identity governance for AI?

Identity governance (Okta, Entra ID, CyberArk) controls which systems an AI agent can access: can it reach the procurement platform? Authority governance controls what the agent is authorized to do once inside: can it approve a $500,000 purchase order? Identity answers “who are you?” while authority answers “what can you decide?” Both layers are necessary; neither is sufficient alone.
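The distinction can be shown as two separate checks that must both pass. This is a toy sketch under stated assumptions: `ACCESS` stands in for an identity system, `APPROVAL_LIMITS` for an authority registry, and none of the names reflect the actual APIs of Okta, Entra ID, or CyberArk.

```python
from typing import Dict, Set, Tuple

# Identity layer (hypothetical): which systems each principal can reach.
ACCESS: Dict[str, Set[str]] = {
    "agent:procure-bot": {"procurement-platform"},
}

# Authority layer (hypothetical): what each principal may decide, and up to what amount.
APPROVAL_LIMITS: Dict[Tuple[str, str], int] = {
    ("agent:procure-bot", "purchase_order.approve"): 10_000,
}


def can_access(principal: str, system: str) -> bool:
    """Identity question: can this principal reach the system at all?"""
    return system in ACCESS.get(principal, set())


def is_authorized(principal: str, decision_type: str, amount: int) -> bool:
    """Authority question: may this principal make this decision at this amount?"""
    limit = APPROVAL_LIMITS.get((principal, decision_type))
    return limit is not None and amount <= limit


def may_approve(principal: str, system: str, decision_type: str, amount: int) -> bool:
    """Both layers must agree before the action proceeds."""
    return can_access(principal, system) and is_authorized(principal, decision_type, amount)
```

In this sketch the agent can reach the procurement platform, yet `may_approve` still rejects a 500,000 purchase order because the authority layer caps it at 10,000.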

Sources

- SailPoint / Dimensional Research. "AI Agents: The New Attack Surface." May 2025.

- OWASP Foundation. "Top 10 for Large Language Model Applications." 2025.

- EU AI Act. "Article 14: Human Oversight." High-risk obligations effective August 2026.

- Singapore IMDA. "Model AI Governance Framework for Agentic AI." January 2026.

- Gartner. "Global AI Regulations Fuel Billion-Dollar Market for AI Governance Platforms." February 2026.

- EY and Society for Corporate Governance. "The Delegation Edge." January 2025.

- Google DeepMind. "Intelligent AI Delegation." February 2026.

- Deloitte. "State of AI in the Enterprise 2026." 2026.

- Peter Kahl. "Boards Cannot Delegate Accountability." 2026.